Twitter, Threads, and the misunderstood nature of engineering

Are there any lessons other tech companies can draw from how Twitter is failing?

When Musk took over Twitter at the end of October last year, the world dichotomized into two camps: people who thought he’d use his engineering and turnaround magic and inject growth, speed and revenue in the company, and people who thought the service would cease to exist within days.

As is usually the case, what played out is more nuanced, and there are many lessons to draw from that particular moment. First, it’s usually best to avoid predictions in the heat of the moment. Second, if you’re taking predictions, it’s a good idea to listen to experts (we’ll get to this in a minute).

Now, after Twitter has spent nine months on mass layoffs, an insane political shift, misplaced product bets and constant service failures, Meta has finally launched Threads to some fanfare. That means we essentially have a natural A/B test on our hands: Elon’s approach to running Twitter vs Mark’s. And so maybe now is a more useful time to attempt to draw some lessons.

First, the obvious point: there are many, many cautionary tales from Twitter – foremost for many is perhaps the shift to extreme right politics, the quite sickening content moderation changes and so on. But when your decision making on that front means that you’re left with the leader of ISIS as the one to speak up for your free speech agenda, I’m not sure there are many cautionary tales that’ll extrapolate as lessons to other companies.

So if we set that aside, I think there are also big engineering lessons, and I think some of them extrapolate to problems of focus and headcount that many other companies grapple with today. I want to focus on the biggest one I’ve taken from this: the nature of complex systems engineering work, and how much people misunderstand it.

If we start in November, one of the most baffling reactions to Twitter’s second round of layoffs was that it would lead to the immediate failure of the service, with people at one time waving goodbye to each other as if it was the last night of Twitter.

I remember thinking at the time that to believe that, you’d have to believe that software engineering operates on some sort of production line principle: as soon as engineers stop coding, the system stops. Much like with the current WGA strike, as soon as the writers stop writing, content production stops. Or if the baker stops baking, there are no bagels in the morning. In other words: a cessation of work leads to an immediate and catastrophic failure.

This is not, plainly, how software engineering works. Engineering, fundamentally, is problem solving in the service of invention of technology. The key here is that the output of engineering is a durable product that exists somewhat independent of its creators. And so if the engineers don’t show up one day, the product still keeps going. In fact, a marker of pride in engineering is how well you as an engineer can make that statement hold true: if you architect a piece of technology that requires your constant hand holding to not fall over, you’ve likely done a shoddy job.

I think what we’ve gotten to see instead is infinitely more fascinating than immediate catastrophe – it’s an actual case study in how complex systems fail.

Complexity and internet scale engineering

Doing modern, internet scale software engineering is about creating and managing complex systems. Despite the hype (and some truth) about cloud, PaaS, ML etc. making coding 10x faster, designing a system like Twitter to manage up to hundreds of millions of people concurrently writing and distributing tweets is fucking hard.

The lesson to draw from the fact that Meta could launch something like Threads in 3-4 months is not that building a Twitter clone at scale is suddenly trivial. It is that Meta at its best is a frighteningly competent technology organization and that 20 years of smart platform investment can pay dividends.

So what does this have to do with complexity? Complex systems are systems where cause and effect aren’t readily apparent, due to dynamics in the relationships between system components. This sort of cause and effect relationship arises all the time in internet-scale software as you’re trying to cope with the scale of work you’re designing for.

To make this more concrete, we need a quick digression - how do you build internet services, and how do you end up with a complex system?

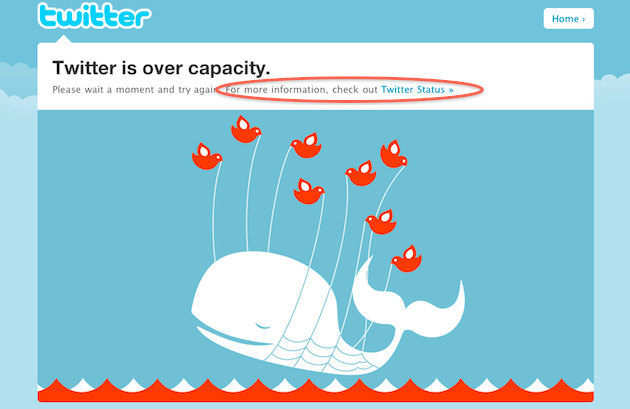

The first thing to understand is that any naive implementation of Twitter – say, a monolithic system taking tweets, inserting them into a central database, and querying that database every time anyone generates their timeline – will mercilessly fail under load, as the original fail whale demonstrated.

So to cope with this, you break up the problem. One system takes in tweets, another distributes them to subscribers, and a third aggregates all tweets into a timeline. All of a sudden you’ve decoupled concerns and enabled the independent sharding and scaling of the components of your architecture. However, by doing this, you move from a simple system to a complicated one. Instead of one simple piece (or maybe two - a service and a database), there are now at least three pieces.
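To make the split a bit more tangible, here is a toy, in-memory sketch of the three pieces described above. Everything in it is hypothetical: the class names (`TweetIngest`, `Fanout`, `TimelineStore`), the in-process queue and the follower map are illustrative stand-ins, and none of this is meant to reflect how Twitter actually implements anything. It only shows how separating ingest, distribution and timeline reads gives you three things that can be scaled on their own.

```python
from collections import defaultdict, deque


class TimelineStore:
    """Holds a precomputed timeline per user (hypothetical component)."""

    def __init__(self):
        self._by_user = defaultdict(list)

    def push(self, user, tweet):
        self._by_user[user].append(tweet)

    def read(self, user, limit=20):
        # Newest first; reading never touches the ingest path.
        return list(reversed(self._by_user.get(user, [])[-limit:]))


class TweetIngest:
    """Accepts tweets and enqueues them; it does no delivery itself."""

    def __init__(self, queue):
        self.queue = queue
        self.next_id = 0

    def post(self, author, text):
        self.next_id += 1
        self.queue.append((self.next_id, author, text))
        return self.next_id


class Fanout:
    """Drains the queue and copies each tweet to every follower's timeline."""

    def __init__(self, queue, followers, timelines):
        self.queue = queue
        self.followers = followers  # author -> set of follower names
        self.timelines = timelines

    def drain(self):
        delivered = 0
        while self.queue:
            tweet = self.queue.popleft()
            author = tweet[1]
            for follower in self.followers.get(author, ()):
                self.timelines.push(follower, tweet)
                delivered += 1
        return delivered


# Wire the three pieces together through a shared queue.
queue = deque()
timelines = TimelineStore()
ingest = TweetIngest(queue)
fanout = Fanout(queue, {"alice": {"bob", "carol"}}, timelines)

ingest.post("alice", "hello")
fanout.drain()
print(timelines.read("bob"))
```

Even in this toy version there are already three components and a queue between them, and a tweet is not visible to anyone until the fan-out step has run. That gap between "posted" and "delivered" is the kind of relationship between components that makes cause and effect harder to trace as the system grows.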

Over time, as the product meets the world and requirements evolve, you start adding pieces to do things like trending topics, image upload and video playback. So now your system has 6 components. And then you want to understand your users, moderate them, sell ads. Suddenly you’ve added another 20 components. And as you add more components, you find yourself needing to do better load balancing, routing, service discovery and other infrastructure concerns.

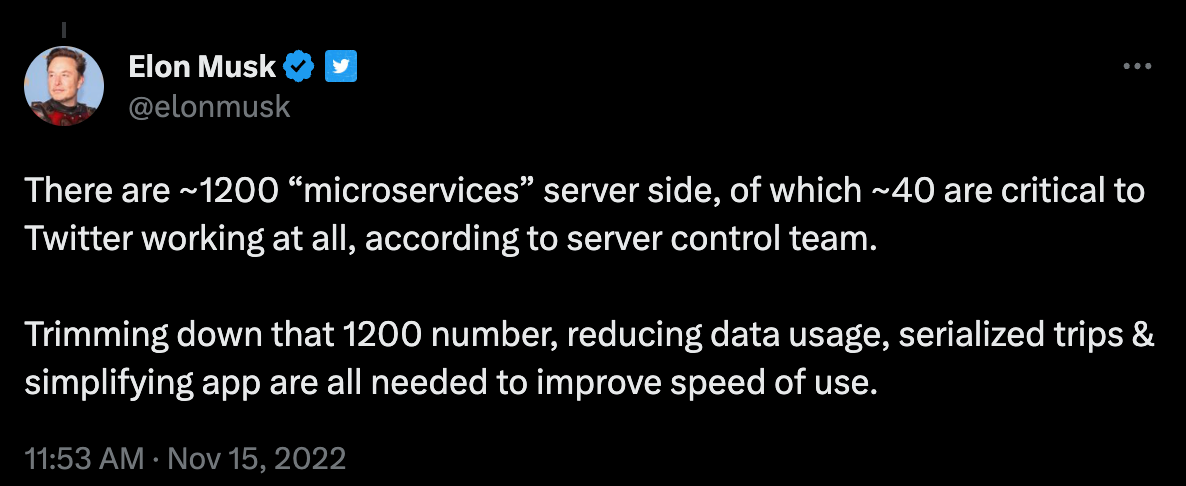

On and on it goes. And with each component you add, you not only introduce more components to your system, you introduce more relationships between these components. And all of a sudden, with only 50 components, your system has well over a thousand communication routes.
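The arithmetic behind that number is simple if you assume that any component could, in principle, talk to any other. That assumption is mine, and real systems are sparser than this, but it gives the upper bound. With n components there are n(n-1)/2 distinct pairs, or n(n-1) routes if you count each direction separately. The function names below are made up for the illustration.

```python
from math import comb


def pairwise_routes(n):
    """Distinct pairs of components, ignoring direction: n * (n - 1) / 2."""
    return comb(n, 2)


def directed_routes(n):
    """Routes when A -> B and B -> A are counted separately: n * (n - 1)."""
    return n * (n - 1)


for n in (3, 6, 26, 50):
    print(n, pairwise_routes(n), directed_routes(n))
```

For 50 components this gives 1,225 pairs, which is where "well over a thousand" comes from. The count grows with the square of the number of components, so doubling the components roughly quadruples the possible relationships.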

How complex systems fail

This necessary evolution leads us back to the point of how complex systems fail.

The reality of even a small system with 50 components is that no one truly knows how it works. And in reality, these systems are much larger than just 50 components. Elon himself said Twitter has 1200 services, which works out to roughly 720,000 potential pairwise communication routes. And so even if you are a genius, there is simply no way you can keep that level of complexity in your head (Francis Crick, who uncovered the double helix structure of DNA, reportedly micro-dosed LSD to help him manage to keep some of the complexity of DNA in his head at one time).

So what we’ve observed with Twitter is the myriad ways in which degradation in this graph has led to systems failure. In the beginning, these failures were small - things like replies coming out of order and minor features sometimes not working.

Gradually, as knowledge about the systems continues to leave Twitter, and as a patchwork of hacky fixes means individual components start deteriorating, the issues have become more severe. The service is regularly and randomly down, reads had to be rate limited due to self-inflicted denial of service attacks and so on. And I expect that this severity cascade will continue. Likely we’ll see an even worse failure in the next 2-3 months as Elon’s urge to compete presses up against a straining and poorly understood infrastructure.

The other and maybe less obvious way in which Twitter has failed technically is their continued inability to ship anything complex.

When Elon took over, there was a hope that he could inject energy into an organization people claimed was mismanaged and unable to ship. And to some extent he has (see aforementioned policy change and radicalization). But while old Twitter couldn’t ship due to indecisive management, new Twitter can’t ship anything of note because no one, fundamentally, understands how it works.

That leads to things like bragging about adding view counts and 2x playback speed, fiddling with coefficients in the ranking system and so on. These are surface level changes, which seem to be the only real changes that can be delivered. Anything that requires any type of broader intervention in the system is probably beyond the reach of the team left (as was depressingly shown when engineers were begging for help from ex-Twitter employees on the anonymous forum Blind).

This isn’t a knock on the team left, by the way. Any given group of engineers stuck in this situation would be doomed.

The latency from fucking around to finding out

To anyone who’s interested in complex system failure, these last nine months have been first confusing, then educational and finally vindicating. Because of the misunderstood nature of engineering, when the immediate doom of the platform didn’t happen, there were claims that cutting R&D by 90% was a masterstroke, and that they could run the platform on a skeleton crew.

That led to some engineers, myself included, doubting their priors. If all of these smart VC people are doing victory laps about the immediate cost savings, and the service isn’t degrading, maybe complex system design isn’t as hard as I’ve been trained to know.

Instead, what we see now is what is actually happening: there is lead time between when you turn the knob in the shower and when the faucet starts raining ice on you. But the cold shower will inevitably come. And so, as expected, we’re now seeing Twitter waning, as the lag time between the fucking around and the finding out is catching up to them.

Enter Meta

Piling on the pain is the way that Meta has exploited this cascading failure. I said before that I think Meta at its best is a very impressive engineering organization. The fact that they could stand up a Twitter competitor and scale it to the level they have in the time it takes some companies to ship a slide deck tells me two things.

The obvious one is that there are certain things a founder-CEO can do that no one else can, and that becomes apparent when quick pivots and aggressive investment are needed. But the more interesting engineering lesson is how much platform investments can come back and compound for you.

To state the obvious: the Threads codebase will be filled with tons of hacks and shortcuts. Even so, they could tap into a platform that offered them mature networking and compute, scalable queues and databases, access to a social graph and core graph embeddings, content moderation, experimentation, compliance and so on.

It’s hard to overstate how much each of these is a multi-year investment that is paying off here in a big way. Yes, some of these components are now core cloud primitives which anyone can buy off the shelf from Amazon or Google (as long as they, you know, pay their GCP bills) and no one should build from scratch. But in all, the configuration and unique nature of these capabilities used right is, as evidenced, a superpower.

What to take away

Now does this mean that Threads will inevitably be a success? I don’t know. But I do know that we’ve already passed the last day in which Twitter could’ve made any plausible argument that they were leaders in even their niche of social, and that Threads will out-execute from this point on. Even if Meta loses focus entirely, stemming and reversing a cascading complex systems failure will keep Twitter from doing anything meaningful for at least the foreseeable future.

Finally then – why does this matter to anyone outside of Twitter and Threads? I think it’s this. As we’re leaving the zero interest rate world behind us, lots of companies are grappling with getting to profitability, and doing so by reducing their headcount. I think it’s reasonable to say that many tech companies have been too bloated for the problem space they’re going after. But there are ways of rectifying that which will help you, and there are ways that won’t. And I think the current arc of Twitter and Threads gives us a good way to turn a mirror onto this decision making process as companies figure out how to optimize. Who do you want to be? Twitter, or Threads?